Recent Projects:

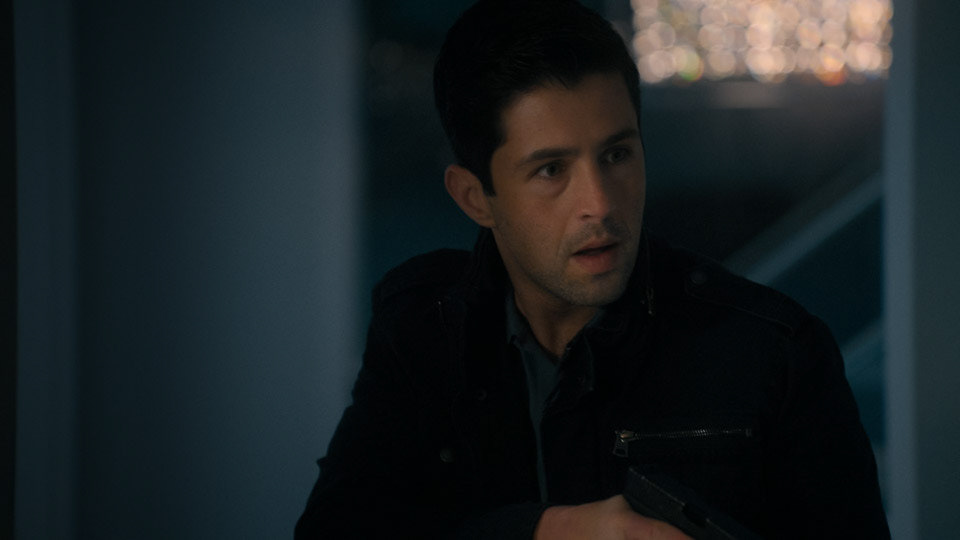

The Recruit - Season 2 - Netflix

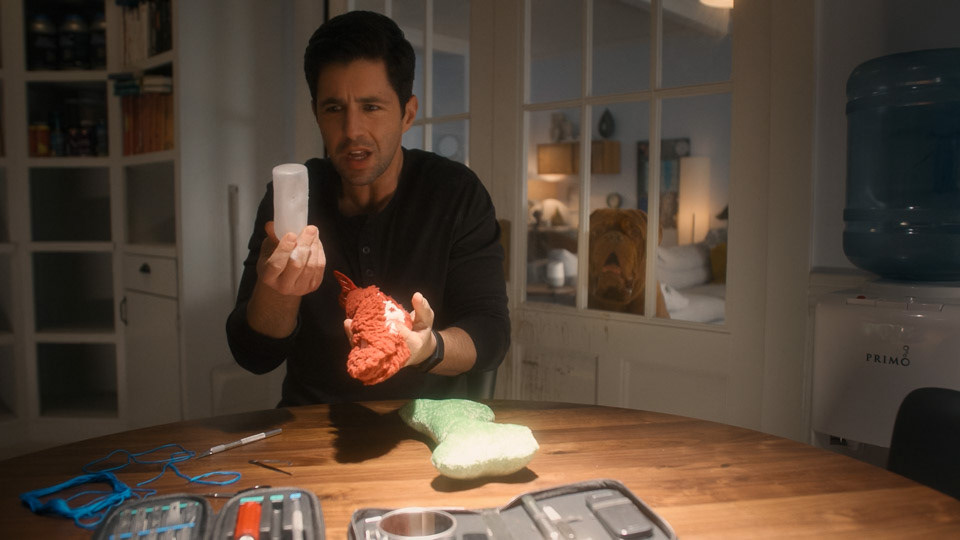

Resident Alien - Season 3 - Syfy

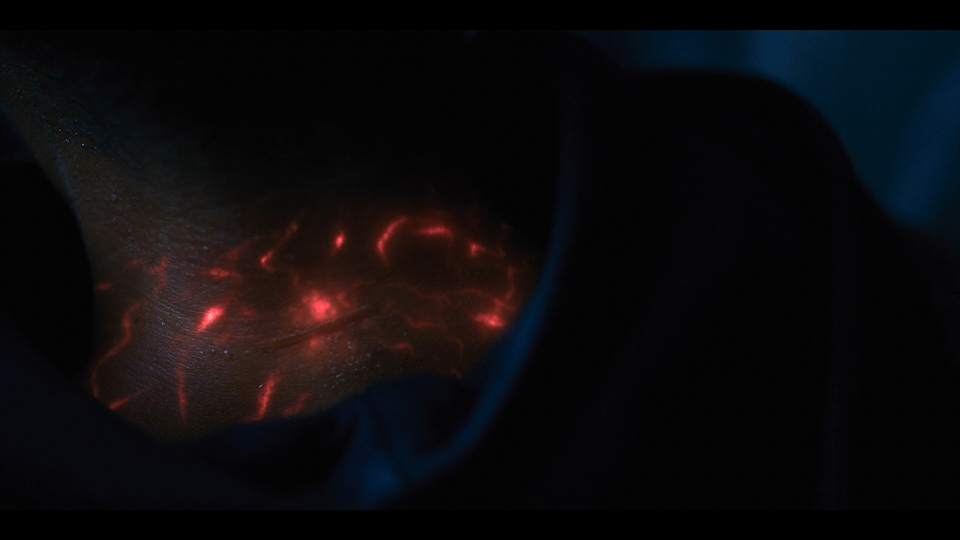

Yellowjackets - Season 2 - Showtime

Devil in Ohio - Netflix

The Midnight Club - Netflix

Turner & Hooch - Disney+

The Haunting of Bly Manor - Netflix

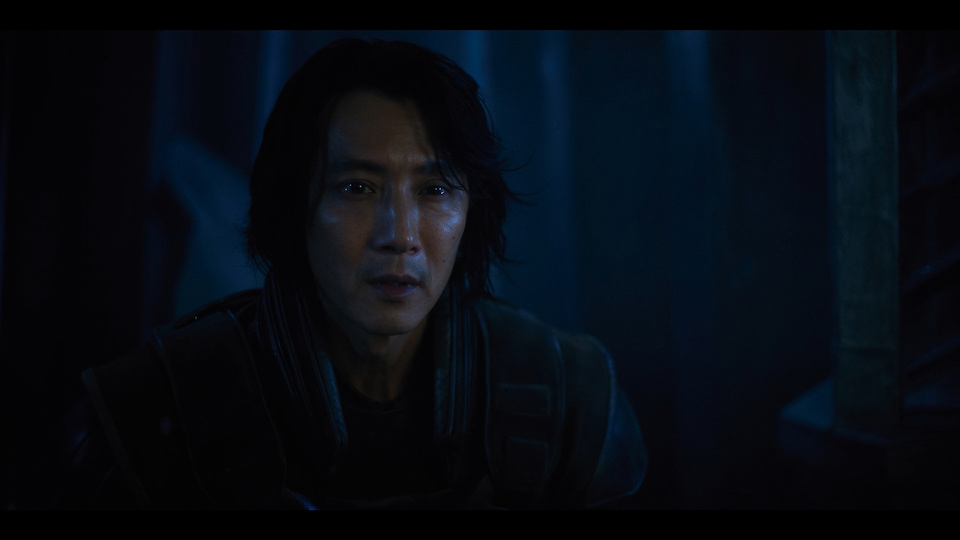

Altered Carbon - Season 2 - Netflix

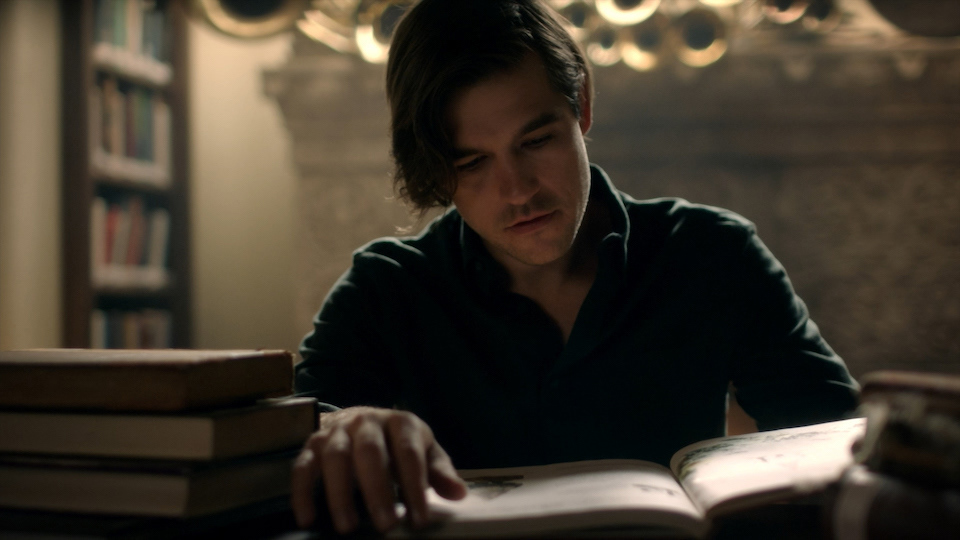

The Magicians - Seasons 4 & 5 - Syfy

The InBetween - Season 1, Pilot and Episode 107 - NBC

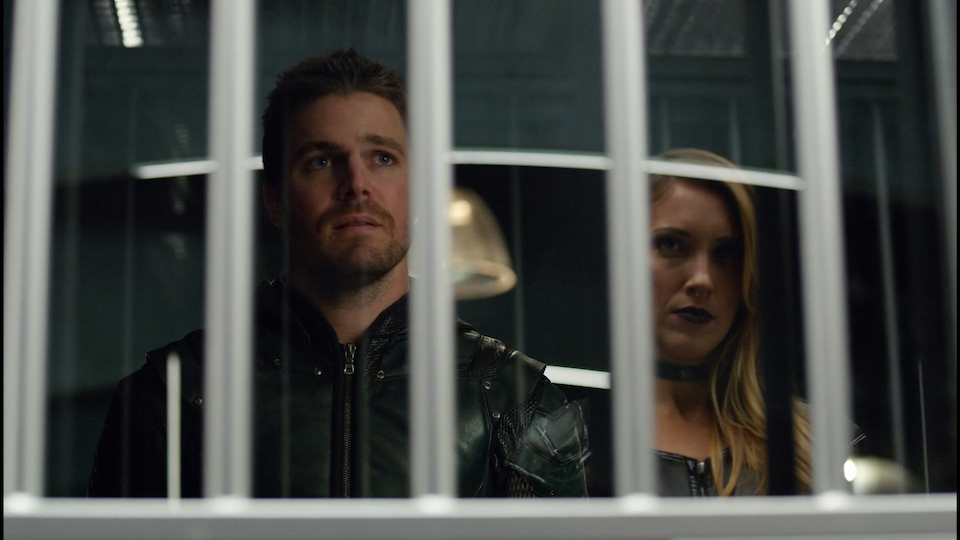

Arrow - Seasons 3, 4, 5 - CW

Blurt - Nickelodeon